The Easy Way to Web Scrape Online Articles

How do you gather the latest insights from multiple sources without spending hours on it? That’s the number one question for people who follow news articles to track trends, monitor competitors, and the like. One popular solution is web scraping. However, it’s not without challenges. Anti-scraping mechanisms, IP bans, and formatting issues can turn this simple task into a pretty frustrating one. They can, but they won’t if you know the basics.

Understanding Web Scraping

Web scraping means extracting data from websites. When scraping Google News, for instance, you automatically collect the information you need from various web pages. Who needs it? Journalists, data analysts, digital marketers, and even academics. They all use news scraper tools for analysis, reporting, or strategic planning.

The key issue here is that websites don’t always play nice. They can detect and block news scraping attempts, often using CAPTCHAs, IP bans, or simply changing their structure. Then there’s the ethical side — not all websites appreciate having their content scraped. So, you need to know the rules to scrape effectively and responsibly.

Web Scraping News Articles With Python

Python is a good fit for news scraping: it’s powerful yet straightforward, so it’s accessible even to those who aren’t hardcore programmers.

Using a library

First, you’ll need a library. Beautiful Soup is a popular choice. It helps parse HTML and XML documents so that it’s easier to extract the data you need.

What does web scraping news articles look like? Let’s take headline scraping as an example. You send a request to the website, parse the HTML response, and extract the text within the <h2> tags with the class headline.
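Here’s a minimal sketch of those three steps, assuming the requests library for the HTTP call and Beautiful Soup for parsing. The function names and the sample HTML are made up for illustration, and a real site will almost certainly use different tags and class names.

```python
import requests
from bs4 import BeautifulSoup


def extract_headlines(html: str) -> list[str]:
    """Return the text of every <h2 class="headline"> element in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    return [h2.get_text(strip=True) for h2 in soup.find_all("h2", class_="headline")]


def fetch_headlines(url: str) -> list[str]:
    """Download a page and extract its headlines."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # fail loudly on 4xx/5xx responses
    return extract_headlines(response.text)


# Offline demonstration on a made-up snippet of HTML (no network needed).
SAMPLE_HTML = """
<html><body>
  <h2 class="headline">Markets rally on rate news</h2>
  <h2 class="headline">  New telescope spots distant galaxy </h2>
  <h2 class="sidebar">Not a headline</h2>
</body></html>
"""
headlines = extract_headlines(SAMPLE_HTML)
print(headlines)
```

Against a live site you would call fetch_headlines() with the page URL instead of parsing the sample string.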

Using a proxy

Now, why use a proxy for scraping Google News? First of all, it helps you avoid getting blocked because it distributes your requests across multiple IP addresses. This way, you can scrape more data without raising red flags. If interested, here I review the best proxies for scraping.

To integrate a proxy, you add the proxies parameter to the requests.get() method and route your requests through a proxy server. That’s a pretty reliable way to bypass some (I’d even say most) of the anti-scraping measures.
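A rough sketch of what that can look like, assuming a small pool of proxy endpoints that you rotate through. The addresses below are placeholders, not real servers, and the helper names are my own.

```python
from itertools import cycle

import requests

# Placeholder endpoints: replace with the addresses your proxy provider gives you.
PROXY_POOL = [
    "http://user:password@proxy1.example.com:8080",
    "http://user:password@proxy2.example.com:8080",
]


def rotating_proxies(pool):
    """Yield a proxies mapping for each pool entry, round-robin, forever."""
    for proxy_url in cycle(pool):
        # requests expects one entry per URL scheme.
        yield {"http": proxy_url, "https": proxy_url}


def fetch_via_proxy(url: str, proxies: dict) -> str:
    """Fetch a page with the request routed through the given proxy."""
    response = requests.get(url, proxies=proxies, timeout=10)
    response.raise_for_status()
    return response.text


proxy_source = rotating_proxies(PROXY_POOL)
# Each call to next(proxy_source) hands back the next proxy in the pool, e.g.:
# html = fetch_via_proxy("https://example.com/news", next(proxy_source))
```

Rotating per request is only one possible policy. Some setups keep one proxy per session instead, so treat this as a starting point.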

News Scraping Without Coding

Not a coder? No problem. There are plenty of tools that make scraping accessible to everyone. I’ll review them in the next section. For now, here’s a general plan for scraping news articles without coding.

Step 1: Choose a Tool

First, you need a news scraper tool that suits your needs. I’d suggest experimenting because it’s the best way to understand what REALLY works for you.

Step 2: Install

Most news scraper tools are browser extensions or desktop applications, so to install one you just follow the installation instructions on its website. Usually, it’s as simple as downloading and clicking through the setup process.

Step 3: Open the Web Page

Now, go to the website you want to scrape articles from. Open the page with the articles you’re interested in. Ensure it’s fully loaded before you move to the next step.

Step 4: Select the Data

Activate the news scraper. You’ll typically enter a selection mode where you can click on elements you want to extract. Click on the headlines, summaries, or any other data points you need. The tool should highlight these elements to confirm your selection.

Step 5: Customize

As a rule, your news scraper will allow you to adjust settings like

- pagination (to scrape multiple pages),

- filters (to exclude unwanted stuff),

- and the output format (CSV, JSON, etc.).

Step 6: Run the Scrape

Once everything is set up, run the scrape. The news scraper tool will automatically extract the target info and save it in your chosen format. This can take a few seconds or minutes (depends on the task scope).

Step 7: Review and Clean

After the scrape is complete, review the results. I’d suggest checking for inconsistencies or missing pieces first. A good news scraper usually provides a preview.

Step 8: Download and Use

Finally, download your output. You can now use it for analysis, reporting, or whatever else. I’ll cover this in more detail later. Most news scraper tools support exporting to various formats so it should be easy to integrate with other software.

News Scraping Tools

As I said earlier, if you plan to scrape without coding, you normally start by choosing a news scraper tool. The choice is actually quite wide.

Browser Extensions

These are the easiest to use. Browser extensions integrate directly with your web browser. That is, you are web scraping news articles with just a few clicks. They’re perfect for quick, small-scale tasks. However, they might not offer advanced features like scheduling.

Pros:

- User-friendly, quick to set up.

- Ideal for small-scale tasks.

Cons:

- Often lack automation features.

Desktop Applications

These offer more advanced features, including automation and integration with other software. They’re great for medium to large-scale scraping projects.

Pros:

- Robust features, high customization.

- Suitable for larger datasets.

- Integration with other software tools.

Cons:

- Can be resource-intensive.

- May require some technical know-how.

Cloud-Based Tools

Cloud-based tools run on remote servers — you don’t worry about your own system’s processing power. They often include advanced features like API access, scheduling, and real-time data updates. They’re perfect for continuous news scraping.

Pros:

- Continuous scraping without taxing your resources.

- Advanced features like real-time updates.

Cons:

- Subscription costs.

APIs

A news scraper API is for those with at least some technical know-how. It allows you to interact with a web service to get what you want. APIs are powerful and flexible.

Pros:

- High customization and control.

- Efficient for complex tasks.

- Real-time access (not always but often).

Cons:

- Requires understanding of news scraper API documentation.

- May involve scripting.

Automation Scripts

For the tech-savvy, automation scripts are super useful as they offer the highest customization. They are custom-written programs that automate scraping Google News. They can handle complex tasks, large datasets, and specific extraction needs. Of course, they require programming skills, but they give flexibility in return.

Pros:

- Super custom and flexible.

- Complete control over web scraping news articles.

Cons:

- Requires significant programming skills.

- Time-consuming to set up.

Proxies

I’ve touched upon these when discussing how Python can scrape news articles. Proxies are not standalone scraping tools; I’d rather call them essential companions for web scraping news articles. They mask your IP address and allow you to make numerous requests. In effect, they turn on a “stealth mode” so that your activities go undetected.

I have a list of free proxies here so you can get a feel of how it works yourself.

Pros:

- Prevents IP bans, enhances anonymity.

- Scraping from geo-restricted sites.

- Higher request volumes.

Cons:

- Requires proper configuration.

- Data retrieval may (not necessarily though) be slower.

Note: Issues You May Encounter

News scraping isn’t 100% smooth, of course. Here are some common issues you might encounter.

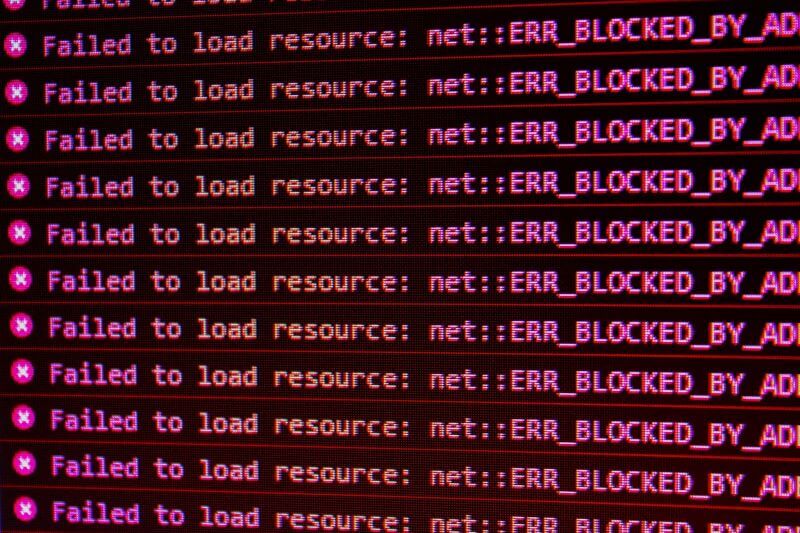

- Anti-Scraping Mechanisms

Many websites implement measures to prevent scraping. You might face CAPTCHAs, rate limiting, or IP blocking.

For example, if you’re scraping too fast, you might see a CAPTCHA pop-up or your IP might get blocked temporarily. That’s actually one of the arguments in favor of using proxies.
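Slowing down is the other obvious remedy. Below is a small sketch of a retry loop that waits longer after each rate-limit response. It assumes the site signals rate limiting with HTTP status 429 (Too Many Requests); some sites answer with a CAPTCHA page or a 403 instead, which this sketch does not handle. The get and sleep parameters exist only so the function can be tried without a network.

```python
import time

import requests


def fetch_politely(url, max_retries=4, base_delay=1.0, get=requests.get, sleep=time.sleep):
    """Fetch a URL, doubling the wait after every 429 response."""
    for attempt in range(max_retries):
        response = get(url, timeout=10)
        if response.status_code != 429:
            return response
        # Exponential backoff: base_delay, then 2x, 4x, ... of it.
        sleep(base_delay * 2 ** attempt)
    raise RuntimeError(f"Still rate limited after {max_retries} attempts: {url}")
```

Typical use is simply fetch_politely("https://example.com/news"), with the defaults doing the real request and the real waiting.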

- Dynamic Content

Websites often use JavaScript to load content dynamically. Traditional scraping methods might miss this data because it doesn’t appear in the initial HTML response. You’ve probably seen this if you ever scraped a page and found it was missing the latest headlines because they loaded after the initial page render.

- Data Structure Changes

Websites frequently update their layouts and structures. A change in HTML tags or class names can break your scraper. For example, today, headlines are in <h2> tags with the class headline. Tomorrow, they might be in <div> tags with a different class.
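One way to soften this problem is to try several selectors in order and use the first one that matches. The sketch below assumes Beautiful Soup. Only the first selector comes from the example above; the other two are hypothetical stand-ins for whatever a redesigned site might use.

```python
from bs4 import BeautifulSoup

# Ordered from the current layout to hypothetical alternative layouts.
HEADLINE_SELECTORS = ["h2.headline", "div.article-title", "h3.title"]


def extract_with_fallback(html, selectors=HEADLINE_SELECTORS):
    """Return headline texts using the first CSS selector that matches anything."""
    soup = BeautifulSoup(html, "html.parser")
    for selector in selectors:
        matches = soup.select(selector)
        if matches:
            return [match.get_text(strip=True) for match in matches]
    return []  # nothing matched: a hint that the layout changed again


old_layout = '<h2 class="headline">Markets rally on rate news</h2>'
new_layout = '<div class="article-title">Markets rally on rate news</div>'
print(extract_with_fallback(old_layout))
print(extract_with_fallback(new_layout))
```

An empty result is worth logging or alerting on, since it usually means the selectors need updating.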

Extracting and Parsing Data

Once you’ve overcome the initial hurdles, you normally extract and parse the data.

HTML Parsing

After fetching the web page, you need to parse the HTML to extract the desired data. Tools like the aforementioned Beautiful Soup or lxml are commonly used for this.

Let’s say you have a web page with headlines inside <h2> tags with a class of headline. Using a parser, you can target these tags and extract the text within them.

Handling Dynamic Content

For sites with dynamic content, tools like Selenium can help. Selenium interacts with the web page as a real user would. It allows you to wait for JavaScript to load the content.

For example, on some sites, headlines may not be present in the initial HTML but are added by JavaScript after the page loads. With Selenium, you wait for these elements to appear and then extract the content.
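A hedged sketch of that waiting pattern with Selenium’s explicit waits. It assumes Chrome and a matching driver are available on your machine, and it reuses the h2.headline selector from the earlier example. The function name is my own.

```python
def fetch_rendered_headlines(url: str, timeout: float = 10.0) -> list[str]:
    """Open the page in Chrome, wait for headlines to render, return their text."""
    # Imported inside the function so the rest of a script still loads
    # on machines where Selenium is not installed.
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    driver = webdriver.Chrome()
    try:
        driver.get(url)
        # Blocks until at least one matching element exists in the DOM,
        # or raises TimeoutException once `timeout` seconds have passed.
        elements = WebDriverWait(driver, timeout).until(
            EC.presence_of_all_elements_located((By.CSS_SELECTOR, "h2.headline"))
        )
        return [element.text.strip() for element in elements]
    finally:
        driver.quit()  # always close the browser, even after an error
```

Driving a real browser is much slower than a plain HTTP request, so it’s usually worth reserving this for pages that really need JavaScript.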

Understanding AJAX

Many modern websites use AJAX (Asynchronous JavaScript and XML) to load content without refreshing the entire page. This can make scraping a bit trickier. What you need might not be present in the initial HTML response. To scrape such content, I’d suggest simulating the AJAX requests. Alternatively, you can use tools that handle asynchronous operations (e.g., specific APIs).
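Simulating an AJAX request usually means calling the same endpoint the page’s JavaScript calls (you can find it in the browser’s network tab) and reading the JSON it returns. The sketch below assumes a hypothetical endpoint that accepts a page parameter and returns a payload shaped like {"articles": [{"title": ...}]}. Real endpoints, parameters, and payloads vary from site to site.

```python
import requests


def parse_articles(payload: dict) -> list[str]:
    """Pull titles out of a payload shaped like {"articles": [{"title": ...}]}."""
    return [item["title"] for item in payload.get("articles", []) if "title" in item]


def fetch_articles(api_url: str, page: int = 1) -> list[str]:
    """Call the JSON endpoint directly instead of loading the full page."""
    response = requests.get(
        api_url,
        params={"page": page},  # hypothetical pagination parameter
        # Some sites expect this header on AJAX calls; many ignore it.
        headers={"X-Requested-With": "XMLHttpRequest"},
        timeout=10,
    )
    response.raise_for_status()
    return parse_articles(response.json())


# Offline demonstration with a made-up payload.
SAMPLE_PAYLOAD = {
    "articles": [
        {"title": "Markets rally on rate news"},
        {"title": "New telescope spots distant galaxy"},
        {"id": 3},  # entries without a title are skipped
    ]
}
titles = parse_articles(SAMPLE_PAYLOAD)
print(titles)
```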

How to Store Scraped Data

So you’ve extracted the content and now you need to store it efficiently.

CSV File

CSV files are simple and effective (especially for storing structured data). They are compatible with many applications, including spreadsheet programs like Excel. What you actually do is

- create a CSV file,

- write your data into it,

- and save it.

This format is great for tables with rows and columns. For example, if you have a list of headlines, you can save them into a CSV file. Each headline will be a new row.
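Those three steps can be sketched with Python’s built-in csv module. The column name and the sample headlines are made up, and the demo writes to an in-memory buffer so it leaves no file behind.

```python
import csv
import io


def write_headlines_csv(headlines, file_obj):
    """Write a header row, then one row per headline."""
    writer = csv.writer(file_obj)
    writer.writerow(["headline"])
    writer.writerows([headline] for headline in headlines)


sample_headlines = [
    "Markets rally on rate news",
    'Mayor says "no comment", leaves',  # quotes and commas are escaped for you
]

buffer = io.StringIO()
write_headlines_csv(sample_headlines, buffer)
csv_text = buffer.getvalue()
print(csv_text)
```

To save to disk instead, pass a file opened with open("headlines.csv", "w", newline="", encoding="utf-8"). The newline="" argument is what the csv module’s documentation recommends for files it writes.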

Database

For larger projects, database storage is a bit more suitable. Here, you can

- create tables,

- insert your extracted data into these tables,

- and then query the output as needed.

For instance, you create a database with a table for headlines. Each headline is stored as a row in this table. You can perform searches and analyses later.
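Here’s a minimal sketch of that flow using SQLite, which ships with Python. The table layout, column names, and helper functions are assumptions for illustration; a bigger project might use PostgreSQL or MySQL instead.

```python
import sqlite3


def save_headlines(conn, headlines, source):
    """Create the table if needed and insert headlines, skipping duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS headlines ("
        "id INTEGER PRIMARY KEY, source TEXT NOT NULL, title TEXT NOT NULL UNIQUE)"
    )
    # INSERT OR IGNORE skips titles that are already stored.
    conn.executemany(
        "INSERT OR IGNORE INTO headlines (source, title) VALUES (?, ?)",
        [(source, title) for title in headlines],
    )
    conn.commit()


def search_headlines(conn, keyword):
    """Return stored titles containing the keyword, oldest first."""
    rows = conn.execute(
        "SELECT title FROM headlines WHERE title LIKE ? ORDER BY id",
        (f"%{keyword}%",),
    )
    return [title for (title,) in rows]


conn = sqlite3.connect(":memory:")  # use a file path to persist the data
save_headlines(conn, ["Markets rally on rate news", "New telescope spots distant galaxy"], "example.com")
save_headlines(conn, ["Markets rally on rate news"], "example.com")  # duplicate, ignored
matches = search_headlines(conn, "telescope")
print(matches)
```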

JSON Format

If you need a flexible format that supports nested data structures, JSON is a good choice. JSON files represent data as key-value pairs. They are ideal for web applications and APIs.
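A small sketch with Python’s built-in json module shows the nesting. The field names and values are invented for the example.

```python
import json

# One scraped article with nested fields (all values are made up).
article = {
    "title": "Markets rally on rate news",
    "source": {"name": "Example News", "url": "https://example.com"},
    "tags": ["markets", "economy"],
}

# Serialize a list of articles; ensure_ascii=False keeps non-English text readable.
json_text = json.dumps([article], indent=2, ensure_ascii=False)
print(json_text)

# Reading it back gives the same nested structure.
restored = json.loads(json_text)
```

To write straight to a file, json.dump(data, file_obj) does the same job with an open file handle.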

How to Use Scraped News Data

Finally, what’s so valuable about scraped data? If you’re reading this, you probably know how to make it work for you. Just in case though, here are a few ideas for what to do with it.

- Trend Analysis — spot emerging topics and predict future trends.

- Competitive Analysis — gain insights into your competitors’ strategies.

- Content Creation — understand what topics are currently trending.

- Sentiment Analysis — determine the overall tone towards a specific topic.

- Research and Reports — support your findings with real-world data.
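To give a feel for the first idea, here’s a deliberately simple take on trend analysis: counting which words show up most often across headlines. The stopword list and sample headlines are made up, and real trend analysis would need far more data and better text processing than this.

```python
import re
from collections import Counter

# A tiny, hand-picked stopword list; a real project would use a fuller one.
STOPWORDS = {"the", "a", "an", "on", "in", "of", "to", "and", "for", "as", "is"}


def top_keywords(headlines, n=3):
    """Return the n most frequent non-stopword words across all headlines."""
    words = []
    for headline in headlines:
        tokens = re.findall(r"[a-z']+", headline.lower())
        words.extend(token for token in tokens if token not in STOPWORDS)
    return Counter(words).most_common(n)


sample = [
    "Markets rally on rate news",
    "Rate cut hopes lift markets",
    "Telescope spots distant galaxy",
]
top = top_keywords(sample, n=2)
print(top)
```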

FAQ

Is it legal to scrape news articles?

Generally, yes. Scraping publicly available data isn’t illegal, but it’s important to respect the website’s terms of service.

Can I scrape news articles without coding?

Yes, you can. Browser extensions and cloud-based tools are particularly good for non-coders.

How do I avoid getting blocked while scraping?

Use proxies to distribute your requests across multiple IP addresses.

Which news scraping tool should I choose?

For beginners, browser extensions are ideal. If you have coding skills, Python libraries are great.

Can scraping harm a website?

Yes. Sending too many requests in a short period can slow down the site or even cause downtime.